This topic could be of interest to a wide variety of our users, but I've put it here because it's mostly a software issue rather than something specific to any one machine. I plan to add links to some short videos as well as screenshots of parts of the process.

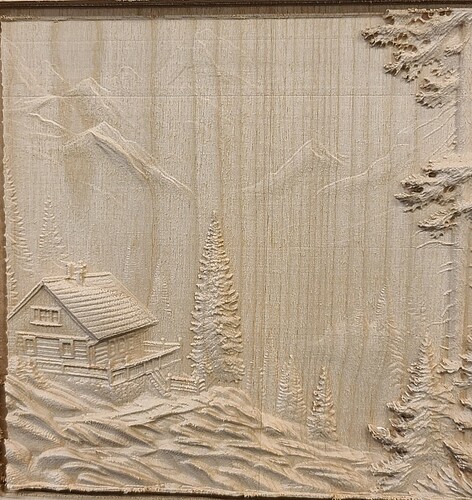

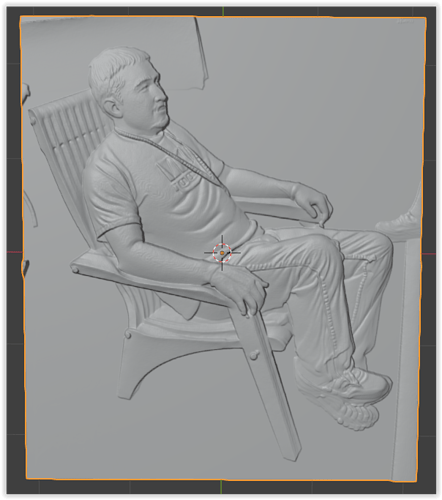

To begin with, some of you know that while I've used my LR4 to cut the parts for the Simba Chair, a lot of my work on both the LR4 and the MPCNC (Primo) has been bas-relief carving. For many years I've been interested in ways to enhance other woodworking projects, such as boxes and tables, with carving, inlay and marquetry.

Since I'm not very artistic, my carving has largely been limited to patterns created by others. Some of these carvings turned out very well, and I've posted them here in various places.

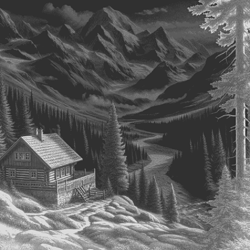

For a while now, however, I've been exploring the use of AI to help create patterns for bas-relief artwork that can be carved on the MPCNC class of machines.

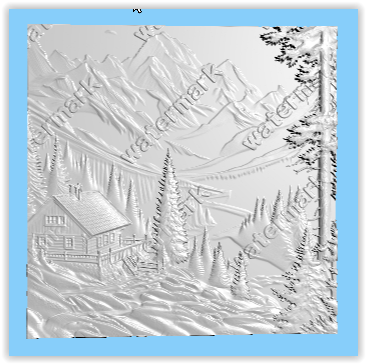

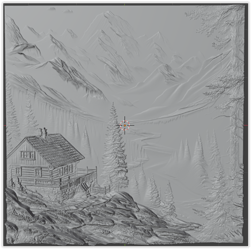

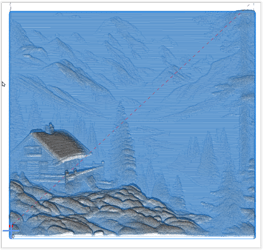

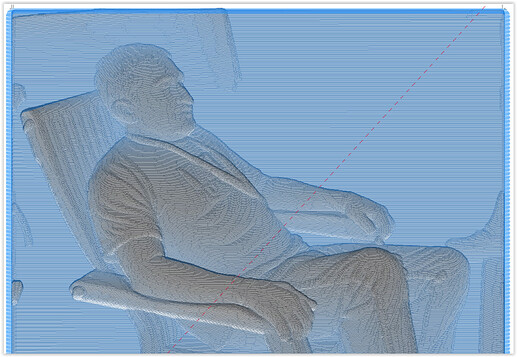

For this type of carving, the essential challenge is to create a greyscale depth map that the CAM software can use to generate the toolpaths and g-code. I happen to use ESTLCAM, but I believe the other available CAM tools have similar capabilities.
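As a rough illustration of what the CAM software does with a depth map, here is a minimal Python sketch. It assumes a simple linear mapping in which white (255) is the top of the stock (no cut) and black (0) is the maximum carve depth. The function names and the 6 mm depth are hypothetical; ESTLCAM and other CAM programs have their own settings (some let you invert the mapping or change the maximum depth), so this is not how any particular program works internally.

```python
def grey_to_depth(grey: int, max_depth_mm: float) -> float:
    """Map an 8-bit grey value to a cut depth below the stock surface.

    Assumption: white (255) = highest point (no cut), black (0) = deepest
    point (max_depth_mm), linearly interpolated in between.
    """
    if not 0 <= grey <= 255:
        raise ValueError("grey must be in the range 0..255")
    return (255 - grey) / 255 * max_depth_mm


def depth_map_to_z(rows: list[list[int]], max_depth_mm: float) -> list[list[float]]:
    """Convert a greyscale image (rows of pixel values) to Z heights.

    Z = 0 at the top of the stock, negative going down into the wood.
    Subtracting from 0.0 avoids printing -0.0 for pure white pixels.
    """
    return [[0.0 - grey_to_depth(g, max_depth_mm) for g in row] for row in rows]


if __name__ == "__main__":
    # A tiny hypothetical 2x3 "image": white, mid-grey and black pixels.
    image = [[255, 128, 0], [0, 128, 255]]
    for row in depth_map_to_z(image, max_depth_mm=6.0):
        print([round(z, 2) for z in row])
```

The real depth map is just a much larger grid of these values; the CAM software then plans tool passes that follow the resulting surface.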

However, this is harder than it looks, because a typical image such as a photo contains ZERO depth information. Converting it to greyscale doesn't help; the depth information is still missing. (Note that the common lithophane is not actually a depth image: its grey levels come entirely from variations in material thickness.)

Unlike a lithophane, a typical depth map relates grey level to depth, with lighter tones closer to the viewer and darker tones farther away. (Consider a white dog with a black nose looking at the camera. The nose is closest to the viewer, so in a depth map it should be the lightest tone, while in a lithophane it's the darkest.)
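To make the dog example concrete, the Python sketch below contrasts the two mappings. It assumes a lithophane whose thickness varies linearly from a hypothetical 0.8 mm (white) to 3.0 mm (black), and a depth map that assigns 255 to the nearest point and 0 to the farthest. The photo grey values and distances for the dog are invented purely for illustration.

```python
def lithophane_thickness(photo_grey: int, min_mm: float = 0.8, max_mm: float = 3.0) -> float:
    """Lithophane: thickness follows the photo's brightness.

    Dark pixels become thick material (little light passes through),
    light pixels become thin. min_mm/max_mm are illustrative, not a standard.
    """
    return min_mm + (255 - photo_grey) / 255 * (max_mm - min_mm)


def depth_map_grey(distance: float, near: float, far: float) -> int:
    """Depth map: grey level follows distance from the viewer.

    The nearest point (near) is white (255), the farthest (far) is black (0).
    """
    d = min(max(distance, near), far)  # clamp into the modelled range
    return round(255 * (far - d) / (far - near))


if __name__ == "__main__":
    # Hypothetical values for the white dog: (photo grey, distance in metres)
    dog = {
        "nose": (20, 0.50),       # black nose, closest to the camera
        "forehead": (235, 0.58),  # white fur, a little farther back
        "ears": (230, 0.70),      # white fur, farthest back
    }
    near, far = 0.50, 0.70
    for part, (grey, dist) in dog.items():
        print(f"{part:9s} lithophane {lithophane_thickness(grey):.2f} mm, "
              f"depth map grey {depth_map_grey(dist, near, far)}")
```

The nose comes out as the thickest (darkest) part of the lithophane but the whitest part of the depth map, which is exactly the inversion described above. Note that the depth map needs the distance values, which a photo simply doesn't contain.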

Until recently, there weren't any really good tools for generating a greyscale depth map. In practice, creating a depth map suitable for CNC carving required extensive manual editing in tools such as Blender or Photoshop.

However, in the last year or so there have been some interesting developments that promise to put the creation of original CNC carving patterns at our fingertips. My approach uses AI to help create a depth map, then Blender to optimize the image for carving.

I'll split this discussion into several sections to keep each one from getting too long, but as a bit of a “teaser”, here's an original creation, a cabin in the mountains, carved on the LR4.