Hi! Thanks for looking into this. Hope you’re feeling better from the food poisoning - that stuff can really knock you out and linger for days.

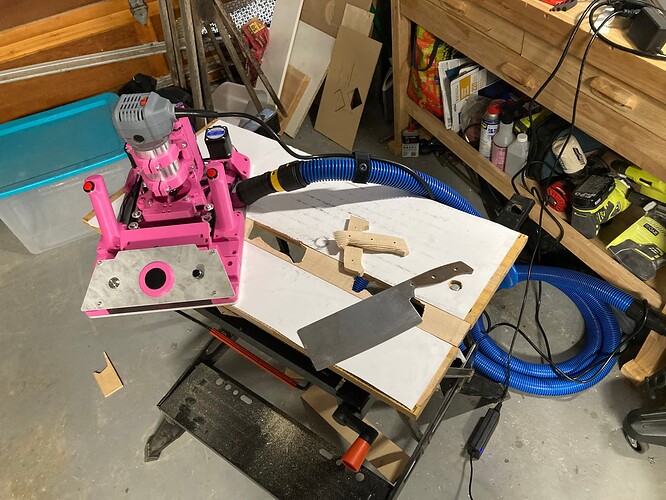

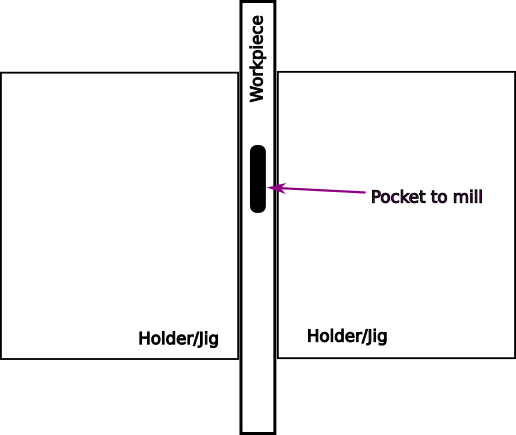

You brought up an excellent point that highlighted my blind spot. I completely forgot we’re working with an XY gantry system, so you’re absolutely right - we’d only need one sensor. The system can simply move that single sensor to perform both X and Y scans, operating like a flatbed scanner with a back-and-forth raster pattern.

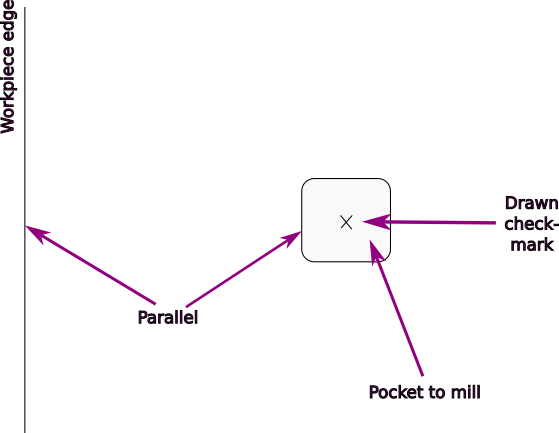

We can start with a coarse scan of the first 5 cm area to locate the approximate intersection, then run a detailed scan of targeted points around it. The more measurement points we collect, the more accurately we can determine the true center. Throughout this process, we’d log the precise X and Y coordinates at each measurement point.
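Here's a rough Python sketch of that coarse-then-fine idea, just to make it concrete. Everything in it is an assumption on my side: `read_sensor(x, y)` is a hypothetical callback that moves the gantry and returns a signal that gets stronger over the crosshair lines, the step sizes and window are placeholders, and picking the strongest column and row is only one simple way to estimate the center. The coarse step also has to be small enough that the sensor can't step right over a line.

```python
from collections import defaultdict
from typing import Callable, Dict, List, Tuple

Point = Tuple[float, float]
Sample = Tuple[float, float, float]  # (x, y, signal)


def raster_points(x_min: float, y_min: float, size: float, step: float) -> List[Point]:
    """Back-and-forth (serpentine) raster over a square area, all units in mm."""
    n = int(round(size / step)) + 1
    points: List[Point] = []
    for row in range(n):
        y = y_min + row * step
        # Reverse every other row so the gantry never travels back empty.
        cols = range(n) if row % 2 == 0 else range(n - 1, -1, -1)
        points.extend((x_min + col * step, y) for col in cols)
    return points


def scan(read_sensor: Callable[[float, float], float],
         x_min: float, y_min: float, size: float, step: float) -> List[Sample]:
    """Visit every raster point and log its coordinates with the reading."""
    return [(x, y, read_sensor(x, y)) for x, y in raster_points(x_min, y_min, size, step)]


def peak_position(samples: List[Sample], axis: int) -> float:
    """Coordinate along axis (0 = X, 1 = Y) whose summed signal is highest."""
    profile: Dict[float, float] = defaultdict(float)
    for x, y, signal in samples:
        profile[(x, y)[axis]] += signal
    return max(profile, key=profile.get)


def locate_crosshair(read_sensor: Callable[[float, float], float],
                     x_min: float, y_min: float,
                     size: float = 50.0, coarse_step: float = 5.0,
                     fine_window: float = 5.0, fine_step: float = 0.5) -> Point:
    """Coarse pass over the whole area, then a fine pass around the coarse peak."""
    coarse = scan(read_sensor, x_min, y_min, size, coarse_step)
    cx, cy = peak_position(coarse, 0), peak_position(coarse, 1)
    fine = scan(read_sensor, cx - fine_window / 2, cy - fine_window / 2,
                fine_window, fine_step)
    return peak_position(fine, 0), peak_position(fine, 1)
```

With this simple peak-picking, the result is only as fine as `fine_step`, so a fit across neighbouring readings would be the natural next step if we need better resolution.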

Knowing the crosshair position is only half the battle, though. If the router bit doesn’t cut where the system thinks it should, all of that precision is shifted by the same error. Do you already have a way to calculate the router bit offset?

My idea for solving this - 4-Corner Router Bit Offset Calibration:

- Scan crosshair to establish reference position (X₀, Y₀)

- Cut 4 reference holes at known ±10mm offsets from center

- Scan the actual hole positions using the sensor system

- Calculate systematic offset by averaging the position errors

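The math is straightforward - for each hole, calculate the error between the commanded and actual position (δx = actual x - commanded x, and likewise δy), then average all 4 errors to get the systematic offset: X_offset = (δx₁ + δx₂ + δx₃ + δx₄) / 4, and Y_offset = (δy₁ + δy₂ + δy₃ + δy₄) / 4 in the same way.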
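As a quick sanity check on the math, here's a minimal Python sketch of the averaging step. The function names, the reference position (100, 50) and the measured hole positions are all made up for illustration, and I'm assuming the error is defined as measured minus commanded.

```python
from statistics import mean
from typing import List, Tuple

Point = Tuple[float, float]


def corner_targets(x0: float, y0: float, d: float = 10.0) -> List[Point]:
    """Commanded hole centers at +/- d mm around the crosshair reference (x0, y0)."""
    return [(x0 - d, y0 - d), (x0 + d, y0 - d), (x0 + d, y0 + d), (x0 - d, y0 + d)]


def position_errors(commanded: List[Point], measured: List[Point]) -> List[Point]:
    """Per-hole error, assumed here to be measured minus commanded."""
    return [(mx - cx, my - cy) for (cx, cy), (mx, my) in zip(commanded, measured)]


def systematic_offset(errors: List[Point]) -> Point:
    """Average the per-hole errors to get (X_offset, Y_offset)."""
    return mean(dx for dx, _ in errors), mean(dy for _, dy in errors)


# Illustrative numbers only, not real measurements.
commanded = corner_targets(100.0, 50.0)
measured = [(90.10, 39.96), (110.14, 39.94), (110.13, 59.95), (90.11, 59.95)]
x_offset, y_offset = systematic_offset(position_errors(commanded, measured))
print(f"X_offset = {x_offset:.3f} mm, Y_offset = {y_offset:.3f} mm")
```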

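This approach gives us redundancy through 4 measurement points, helps average out hole-to-hole variation from spindle runout and machine flex, and provides self-validation: if the four errors disagree with each other by a lot, we know something other than a constant offset is going on. Once calibrated, we apply the correction: corrected_position = target_position - calculated_offset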
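To show what I mean by the correction and the self-validation, here's a small Python sketch. The names, the example errors and the 0.05 mm tolerance are placeholders I picked for illustration, not values from the machine.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def apply_correction(target: Point, offset: Point) -> Point:
    """corrected_position = target_position - calculated_offset, per axis."""
    return target[0] - offset[0], target[1] - offset[1]


def max_residual(errors: List[Point], offset: Point) -> float:
    """Largest distance between any single hole error and the averaged offset."""
    return max(math.hypot(dx - offset[0], dy - offset[1]) for dx, dy in errors)


# Illustrative per-hole errors (mm) and their average.
errors = [(0.10, -0.04), (0.14, -0.06), (0.13, -0.05), (0.11, -0.05)]
offset = (0.12, -0.05)

TOLERANCE_MM = 0.05  # hypothetical threshold for "the four holes agree"
calibration_ok = max_residual(errors, offset) <= TOLERANCE_MM

corrected = apply_correction((50.0, 25.0), offset)
```

If `calibration_ok` comes out false, I'd treat the averaged offset as suspect and rerun the cuts rather than apply it.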

Essentially, we’re creating a mini coordinate measuring system using the gantry’s own capabilities. The crosshair gets us into the ballpark, but this calibration system gets us to precision.

What do you think about this approach for handling the router bit offset issue?

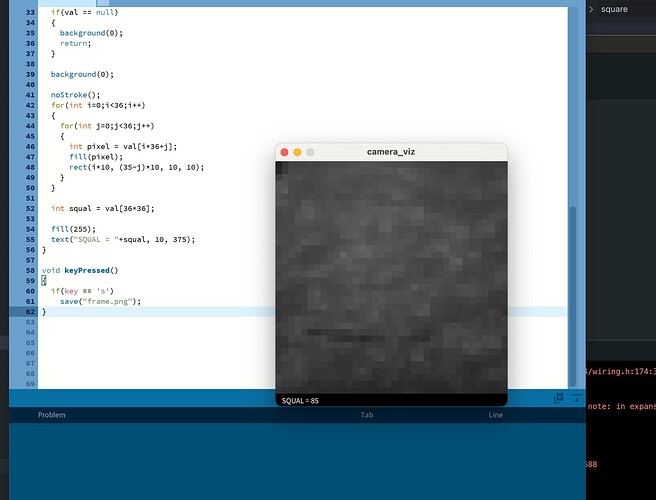

I made a Claude demo, so you can take a look at it: https://claude.ai/public/artifacts/59bfa2f4-7291-4f02-8ed2-a3a8c140654a